We are big fans of Optimizely for running A/B tests on web pages. Like Qualaroo, it is one of the tools that empower marketers to be less dependent on engineering to improve website conversion rates. In fact, the two tools become even more powerful when you use them together. Many of our customers use Qualaroo to identify conversion issues and then apply those insights to their next Optimizely test.

For example, a Qualaroo question can appear at the point where someone is about to download software, asking: “Is there anything preventing you from downloading the software at this point?” The answers can help you create a much more effective test version of the download page, one that addresses real customer issues. One customer recently shared that this approach helped them create a page variation that doubled the download rate in a single test.

Since so many of our customers use both Qualaroo and Optimizely, we’ve now made it easier to get even more value from the combined toolset.

Target Surveys to a Specific Optimizely Variation

You can now target a survey to visitors who are assigned to a particular variation of an Optimizely experiment.

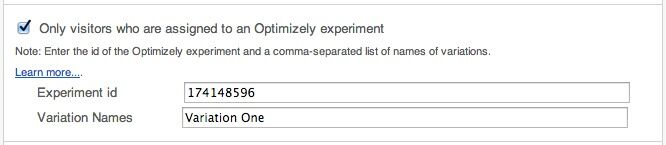

How to Configure This

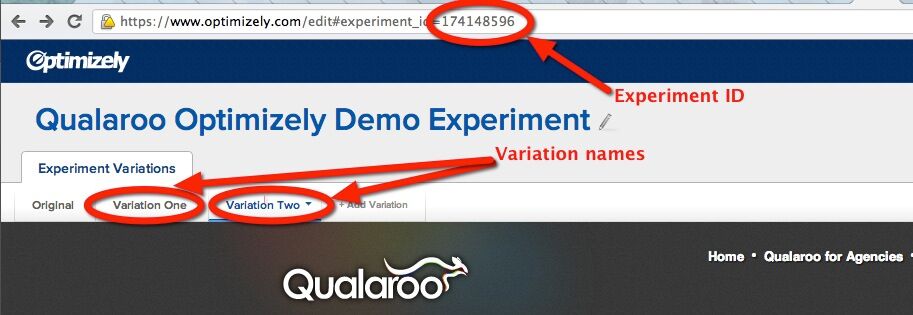

- Find the experiment ID and the variation names in your Optimizely dashboard.

- Enter the Optimizely experiment ID and the variation name in the survey configuration.
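Conceptually, the targeting rule pairs one experiment ID with one variation name, and the survey is shown only to visitors whose assignment matches both. The sketch below illustrates that logic; it is not Qualaroo's implementation. `SurveyTarget`, `should_show_survey`, and the shape of the `assignments` mapping are hypothetical names chosen for this example, and the ID and variation names are made up.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class SurveyTarget:
    """Hypothetical targeting rule: one experiment ID plus one variation name."""

    experiment_id: str
    variation_name: str


def should_show_survey(target: SurveyTarget, assignments: dict[str, str]) -> bool:
    """Return True only if the visitor is assigned to the targeted variation.

    `assignments` is an assumed input: it maps the ID of each experiment that
    is active for the visitor to the name of the variation they were given.
    """
    assigned = assignments.get(target.experiment_id)
    if assigned is None:
        # The experiment is not active for this visitor, so the survey stays hidden.
        return False
    return assigned == target.variation_name


# Made-up values for illustration only.
target = SurveyTarget(experiment_id="1234567", variation_name="Variation #1")
visitor_assignments = {"1234567": "Variation #1"}
show = should_show_survey(target, visitor_assignments)
```

Because the rule requires the experiment to be active for the visitor, a survey configured this way never appears on pages where the experiment is not running.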

What Can You Do With It?

This integration allows you to do two things:

1. Use Qualaroo surveys to understand why one Optimizely variation performs better

Suppose you run an A/B test on a page that explains your product's pricing, and one variation ends up converting better. Knowing why makes it easier to evolve the winning variation further, especially when the difference in performance is small. Ask the visitors who saw each variation: “Is our pricing clear? If not, what did you find confusing?” Targeting a Qualaroo survey to users who saw a particular version of the page helps inform your next experiment.

How to do it?

Create a survey for each variation and target it to that variation. Each survey will then be displayed only when (and where) the experiment is active and the visitor has been assigned to the matching variation. Our delay option can give your visitors enough time to read the text before you ask the question.

2. Use Optimizely to A/B test Qualaroo surveys

Several customers have expressed interest in A/B testing surveys against each other to find the wording and question order that get the most high-quality responses. Now you can use Optimizely to run this experiment.

How to do it?

Create an experiment in Optimizely with two variations. The variations will not modify the page itself. Configure two surveys on the same page. Target each to a different variation. Done. Now Optimizely will assign some users to one survey and some to the other. Wait for enough data and analyze the number and quality of responses that each version of the survey received.
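To judge whether one survey version really draws more responses, you can compare the two response rates with a standard two-proportion z-test. The sketch below is one possible way to do that, not something Qualaroo or Optimizely provides; the function name `compare_response_rates` and all counts are invented for illustration, and it assumes you can export the number of visitors who saw each survey and the number who answered.

```python
from math import sqrt
from statistics import NormalDist


def compare_response_rates(responses_a, visitors_a, responses_b, visitors_b):
    """Two-sided two-proportion z-test on survey response rates.

    Returns (rate_a, rate_b, p_value). A small p-value suggests the
    difference in response rate is unlikely to be due to chance alone.
    """
    if visitors_a <= 0 or visitors_b <= 0:
        raise ValueError("each survey version needs at least one visitor")

    rate_a = responses_a / visitors_a
    rate_b = responses_b / visitors_b

    # Pooled rate under the assumption that both versions perform the same.
    pooled = (responses_a + responses_b) / (visitors_a + visitors_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if std_err == 0:
        # Nobody (or everybody) responded in both groups: no detectable difference.
        return rate_a, rate_b, 1.0

    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return rate_a, rate_b, p_value


# Invented counts for illustration only.
rate_a, rate_b, p_value = compare_response_rates(40, 1000, 80, 1000)
```

This covers only the number of responses. Judging their quality still means reading the answers each version collected.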

This feature is available in our Small Business and Professional plans, which come with a 30-day free trial. Sign up and contact us at support@qualaroo.com to have the feature enabled. If you are already on one of these plans, just email support if you would like to give this a try.
